Unmasking bias: Peering through the looking glass of image generation tools

Natalie Lambert

5/29/2023 · 5 min read

Let me tell you a story. It's a true story, and one that opened my eyes to the bias in AI tools. The specific tool doesn't matter, because I believe all tools have some level of bias, whether intentional or not. These tools are a reflection of their training data, and what I experienced is likely the result of a subconsciously biased dataset (which I believe is being adapted and improved every day).

For those who have been following my Gen AI journey, you know I have been playing with as many tools as I can get my hands on to understand the use cases and best practices for using AI in marketing. This stop on the journey is about my experience with image creation tools.

Let me preface everything by saying that I find these image creation tools incredibly cool, and I will continue to explore use cases where they can supercharge marketers. Unfortunately, I have not yet been able to use their output in my work: getting images with the look and feel I need (likely a prompting problem) that also align with brand guidelines has been a challenge. But in a moment of frustration, I had an idea: I would create AI-generated headshots of each speaker at an upcoming event. There typically aren't brand guidelines around headshots, so it seemed like a fun, creative way to bring AI-generated content to our event. It was also somewhat poetic, given that the event was about AI.

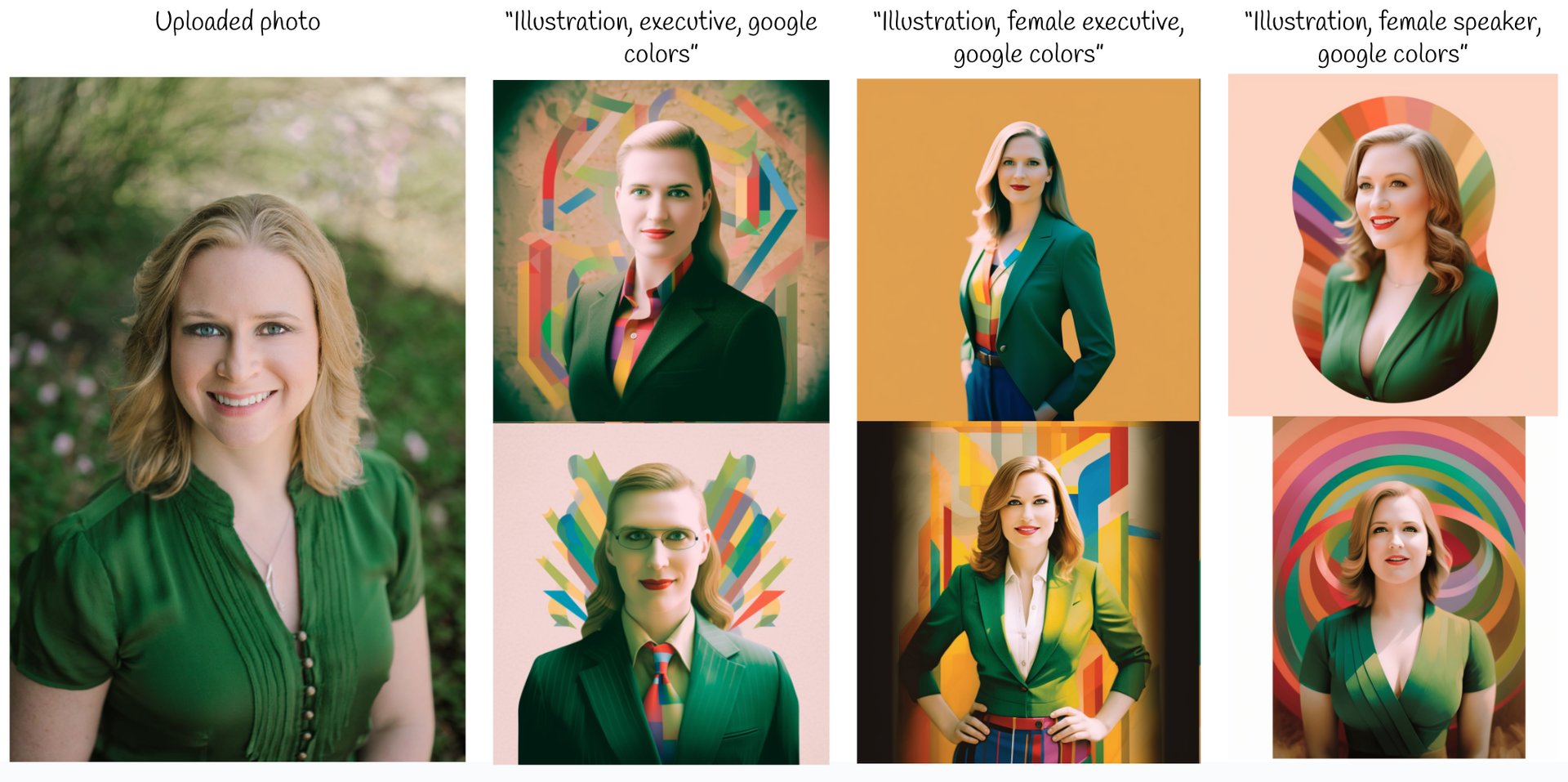

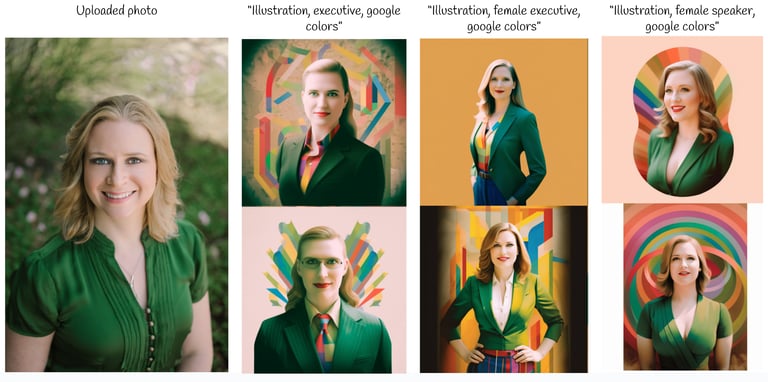

I first had to upload a current photograph to serve as the basis for the new headshot. I then played with prompts until I found a style I liked, focusing on a) the colors (red, blue, green, and yellow) and b) the style (an illustrated look). It was all pretty intuitive, and ultimately the prompt "illustration, Google colors" worked well. Yes, the prompt was that simple.

I then applied this prompt to the actual photographs of the speakers, starting with the men's headshots. I wanted them to look professional, so I added the word "executive" to the prompt. While the results were not always a spitting image of the person (and in some cases, not even close), they did look like cool illustrations of a business executive and bore a strong resemblance to the speaker's uploaded photo.

Next, I created the headshots for the women, using the same prompt I had used for the men: "illustration, executive, Google colors." The tool produced the same style of image, but there was a glaring issue: most of the images were of men with long hair.

I tried again, this time specifying that I wanted a "female executive." The result was what I wanted. While I was a bit disturbed that this clarification was required, I was on a deadline and had a prompt that worked, so I used it for the remaining images and completed my task. In the end, the images looked great, and the team asked me to create more. It took mere minutes, since I already had a working prompt.

Then came the part of this story I did not expect. One of the speakers pointed out that all of the AI-generated headshots showed people in suits, including the women; in one case, a woman was even wearing a tie. It was true, and I hadn't even noticed! As a woman in tech who has been an executive, I despise suits and simply don't wear them. How did I miss this?

I iterated with the tools to figure out how to keep the women's illustrations from automatically showing them in suits. It turns out "female speaker" did the trick. Below is the progression of images I created using the prompts cited above, with my LinkedIn photo as the basis:

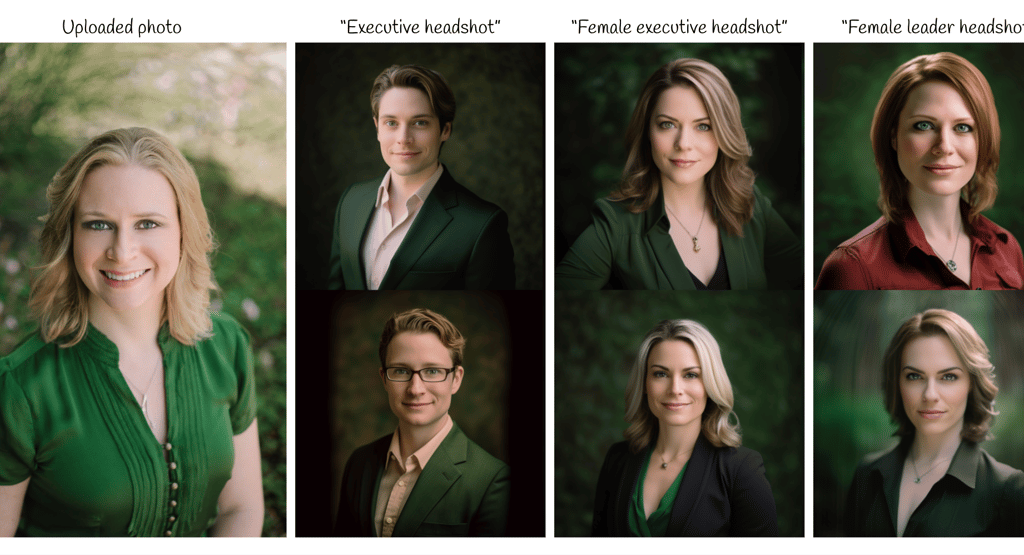

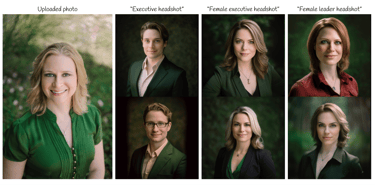

Now I was fascinated by what was happening. To remove any creative liberties that the "illustration" and "Google colors" portions of the prompt might have introduced, I recreated the experiment with a very simple prompt to see whether the same results occurred:

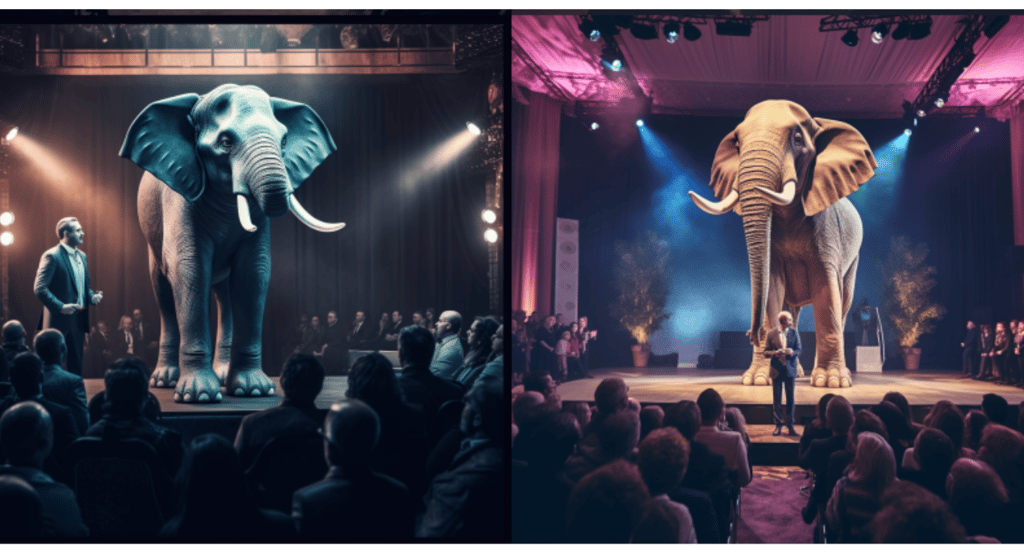

Let's dig into this entire experience. Initially, the tool's bias was to make all "executives" look like men or have strong male characteristics; this bias showed up in approximately 80% of the nearly one hundred images I created. It took a clarification in the prompt to get the tool to depict an "executive" as someone other than a man. Even then, it dressed the women in traditionally male clothing, and it took another prompt change to get an image of a woman in a more traditionally female outfit. This leads me to believe that the tool's training data includes a large number of images of men in suits tagged "executive." To this image creation tool, in other words, "executive" equates to a man. I then ran an informal, non-scientific test, asking the tool to create an image from the prompt: "executive speaking at an event, on a stage, with an elephant standing on the back of the stage." Any guesses for the gender of the exec it created? Yep, a man (in a suit)!

Now, the moral of my story. It's not to stop using these incredible tools. Rather, you must examine the results carefully for hidden biases that may not be visible at first glance. I have used five image creation tools, and while this exact bias did not appear in all of them, I have noticed certain races, body types, and genders being generated at higher rates with some prompts than with others. I'm disappointed that I didn't notice these things at first, and I'm glad someone pointed them out to me. This example also confirms my belief, noted in my previous post, that AI needs a human partner to create amazing work. Left to their own devices, I don't trust the results of generative AI tools.

I'm curious: have you found bias in the AI tools you have played with? What was it? Did you notice it immediately? And did you report it?

I don't know how to report this, but there clearly needs to be an easy way to do so. I would love for AI vendors to provide a place within their tools or on their websites where users can easily submit examples of potential bias. Together, we can make generative AI tools work better for all of us.

This blog was also published on my LinkedIn profile.